Intent Training and Testing

Training a model with your training corpus allows your bot to discern what users say (or in some cases, are trying to say).

You can improve the acuity of the cognition through rounds of intent testing and intent training. You control the training through the intent definitions alone; the skill can’t learn on its own from the user chat.

Testing Utterances

We recommend that you set aside 20% of your corpus for intent testing and use the remaining 80% to train your intents. Keep these two sets separate so that the test utterances, which you incorporate into test cases, remain "unknown" to your skill.

Apply the 80/20 split to each intent's data set. Randomize your utterances before making this split to allow the training models to weigh the terms and patterns in the utterances equally.
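The per-intent split described above can be sketched in a few lines of Python. This is only an illustration prepared outside the product: the function name split_corpus, the sample intent names, and the fixed seed are assumptions, not part of Oracle Digital Assistant.

```python
import random

def split_corpus(utterances_by_intent, test_fraction=0.2, seed=42):
    """Shuffle each intent's utterances, then split them into train and test sets."""
    rng = random.Random(seed)  # fixed seed (an assumption) keeps the split reproducible
    train, test = {}, {}
    for intent, utterances in utterances_by_intent.items():
        shuffled = list(utterances)  # copy so the caller's list is left untouched
        rng.shuffle(shuffled)        # randomize before splitting
        n_test = round(len(shuffled) * test_fraction)
        test[intent] = shuffled[:n_test]
        train[intent] = shuffled[n_test:]
    return train, test

# Hypothetical corpus used only for illustration.
corpus = {
    "OrderPizza": [f"order pizza variant {i}" for i in range(10)],
    "CancelPizza": [f"cancel pizza variant {i}" for i in range(5)],
}
train_set, test_set = split_corpus(corpus)
```

Splitting inside each intent, rather than across the whole corpus, keeps every intent represented in both sets.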

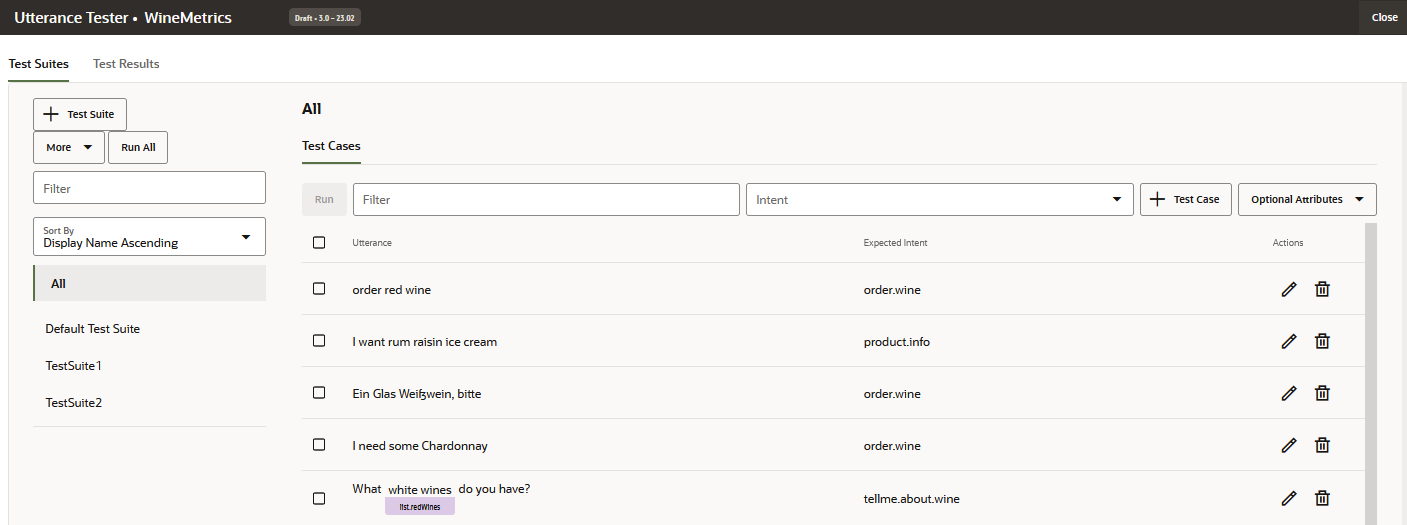

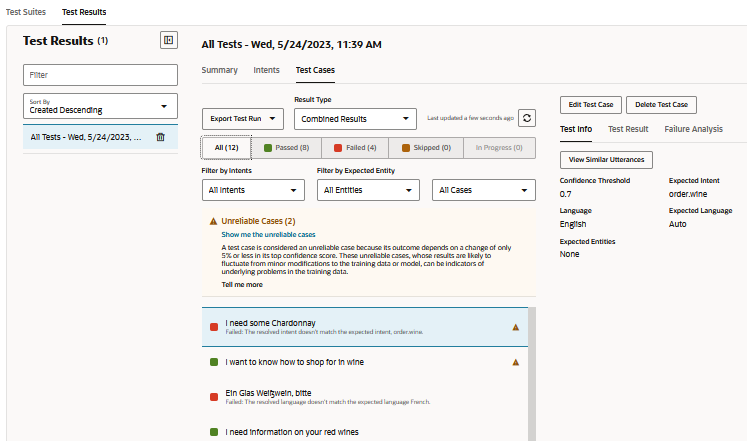

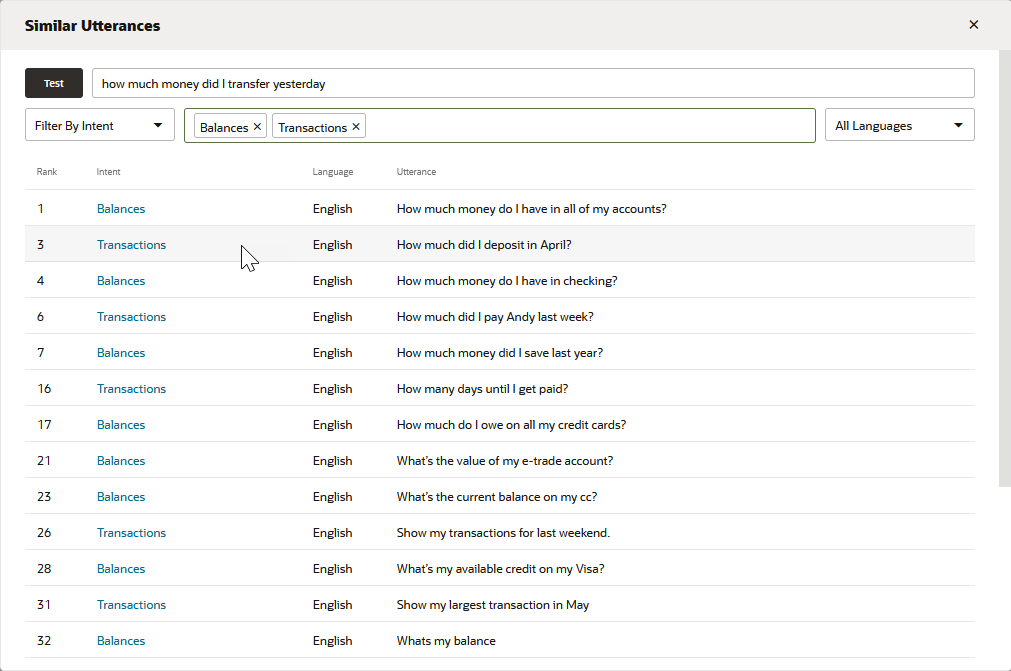

The Utterance Tester

The Utterance Tester is your window to your skill's cognition. By entering phrases that are not part of the training corpus, you can find out how well you've crafted your intents by reviewing the intent confidence ranking and the returned JSON. This ranking, which is the skill's estimate for the best candidate to resolve the user input, demonstrates its acuity at the current time.

Description of the illustration utterance-tester-quick-test.png

Using the Utterance Tester, you can perform quick tests for one-off testing, or you can incorporate an utterance as a test case to gauge intent resolution across different versions of training models.

Test Cases

Each test has an utterance and the intent that it's expected to resolve to, which is known as a label match. A test case can also include matching entity values and the expected language for the utterance. You can run test cases when you’re developing a skill and later on, when the skill is in production, you can use the test cases for regression testing. In the latter case, you can run test cases to find out if a new release of the training model has negatively affected intent resolution.

Like the test cases that you create with the Conversation Tester, utterance test cases are part of the skill and are carried along with each version. If you extend a skill, then the extension inherits the test cases. Whereas conversation test cases are intended to test a scenario, utterance test cases are intended to test fragments of a conversation independently, ensuring that each utterance resolves to the correct intent.

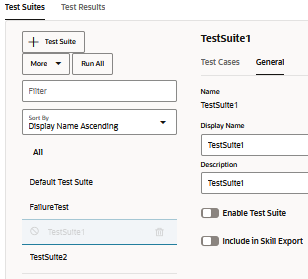

Manage Test Cases

nluTestSuites folder that houses the skill's test suites when the

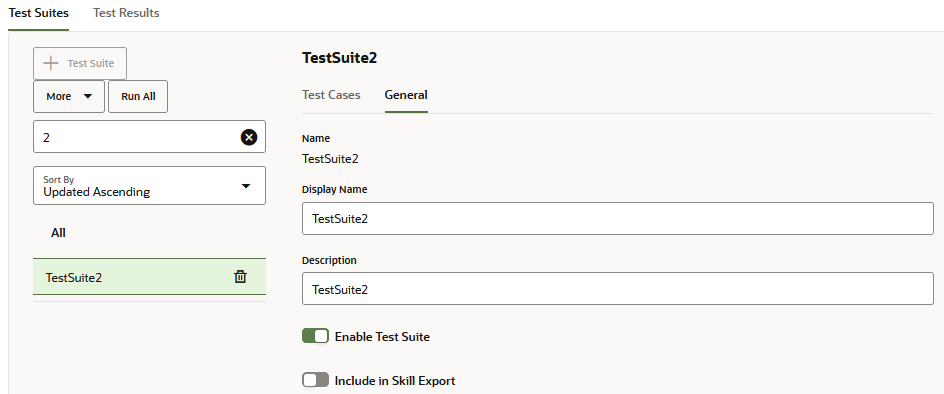

skill is exported.Create Test Suites

- Click + Test Suite.

- In the General tab, replace the placeholder name (TestSuite0001, for example) with a more meaningful one by adding a value in the Display Name field.

- Optionally, add a description that explains the functionality that's covered by the test suite.

- Populate the test suite with test cases using any (or a combination) of the following methods:

  - Manually adding test cases (either by creating a test case or by saving an utterance as a test case from the Utterance Tester).

  - Importing test cases.

    Note

    To assign a test case to a test suite via import, the CSV's testSuite field can either be empty, or must contain a name that matches the test suite that's selected in the import dialog.

  - Editing a test case to reassign its test suite.

- If you want to exclude the test suite from test runs that are launched using the All and Run All options, switch off Enable Test Suite.

- If you don't want the test suite included with the skill export, switch off Include in Skill Export. When you switch off this option for a test suite, it won't be included in the nluTestSuites folder that houses the skill's test suites in the exported ZIP file.

Create Utterance Test Cases

You can add test cases one by one using either the Utterance Tester or the New Test Case dialog (accessed by clicking + Test Case), or you can add them in bulk by uploading a CSV.

Each test case must belong to a test suite, so before you create a test case, you may want to first create a test suite that reflects a capability of the skill, or some aspect of intent testing, such as failure testing, in-domain testing, or out-of-domain testing.

Tip:

To provide adequate coverage in your testing, create test suite utterances that are not only varied conceptually, but also grammatically, since users will not make requests in a uniform fashion. You can add these dimensions by creating test suites from actual user messages that have been queried in the Insights Retrainer and also from crowd-sourced input gathered from Data Manufacturing.

Add Test Cases from the Utterance Tester

- Click Test Utterances.

- If the skill is multi-lingual, select the native language.

- Enter the utterance then click Test.

- Click Save as Test Case then choose a test suite.

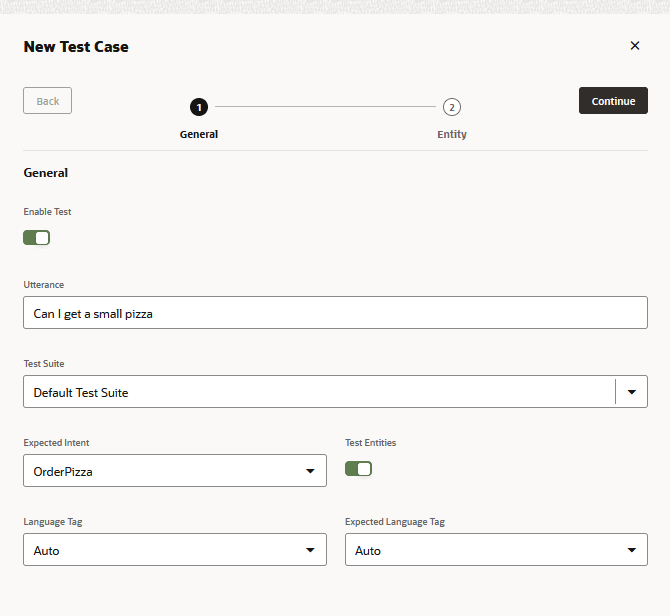

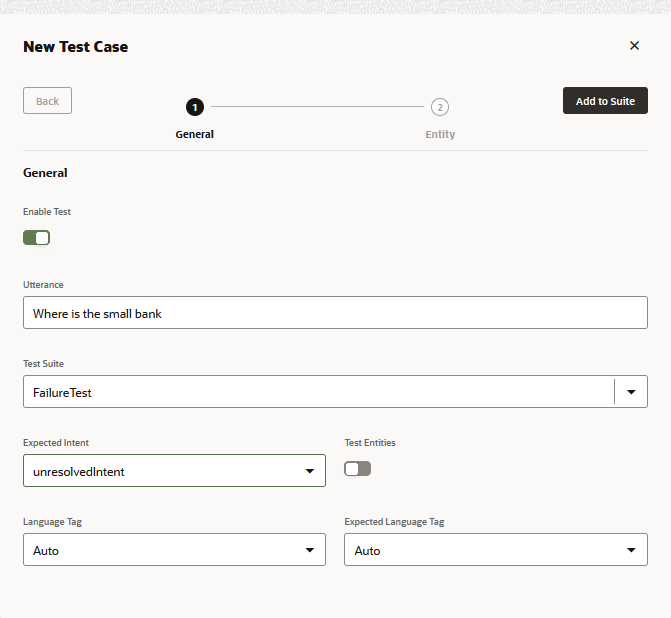

Create a Test Case

- Click Go to Test Cases in the Utterance Tester.

- Click + Test Case.

- Complete the New Test Case dialog:

  - If needed, disable the test case.

  - Enter the test utterance.

  - Select the test suite.

  - Select the expected intent. If you're creating a test case for failure testing, select unresolvedIntent.

  - For multi-lingual skills, select the language tag and the expected language.

- Click Add to Suite. From the Test Cases page, you can delete a test case, or edit a test case, which includes reassigning the test case to a different test suite.

- To test for entity values:

  - Switch on Test Entities. Then click Continue.

  - Highlight the word (or words) and then apply an entity label to it by selecting an entity from the list. When you're done, click Add to Suite.

Note

Always select words or phrases from the test case utterance after you enable Test Entities. The test case will fail if you've enabled Test Entities but have not highlighted any words.

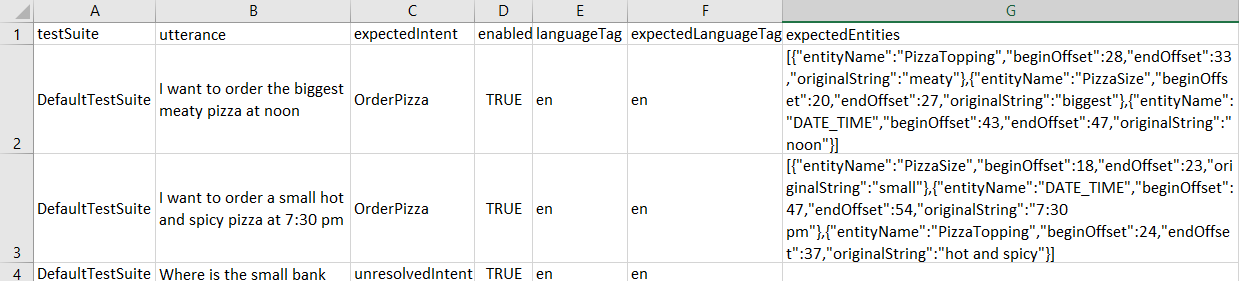

Import Test Cases for Skill-Level Test Suites

The CSV can include the following fields:

- testSuite – The name of the test suite to which the test case belongs. The testSuite field in each row of the CSV can have a different test suite name or can be empty.

  - Test cases with empty testSuite fields get added to a test suite that you select when you import the CSV. If you don't select a test suite, they will be assigned to Default Test Suite.

  - Test cases with populated testSuite fields get assigned to the test suite that you select when you import the CSV only when the name of the selected test suite matches the name in the testSuite field.

  - If a test suite by the name of the one specified in the testSuite field doesn't already exist, it will be created after you import the CSV.

- utterance – An example utterance (required). Maps to query in pre-21.04 versions of Oracle Digital Assistant.

- expectedIntent – The matching intent (required). This field maps to TopIntent in pre-21.04 versions of Oracle Digital Assistant.

  Tip:

  Importing Pre-21.04 Versions of the CSV tells you how to reformat pre-21.04 CSVs so that you can use them for bulk testing.

- enabled – TRUE includes the test case in the test run. FALSE excludes it.

- languageTag – The language tag (en, for example). When there's no value, the language detected from the skill's language settings is used by default.

- expectedLanguageTag (optional) – For multilingual skills, this is the language tag for the language that you want the model to use when resolving the test utterance to an intent. For the test case to pass, this tag must match the detected language.

- expectedEntities – The matching entities in the test case utterance, represented as an array of entityName objects. Each entityName identifies the entity value's position in the utterance using the beginOffset and endOffset properties. This offset is determined by character, not by word, and is calculated from the first character of the utterance (0-1). For example, the entityName object for the PizzaSize entity value of small in I want to order a small pizza is: [{"entityName":"PizzaSize","beginOffset":18,"endOffset":23,"originalString":"small"}, …]
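Because the offsets in expectedEntities are counted by character, they are easy to get wrong by hand. The following Python sketch shows one way to compute them before building the CSV. The helper name entity_annotation is hypothetical, and the sketch assumes that the entity value appears exactly once in the utterance (str.index returns the first occurrence).

```python
import json

def entity_annotation(utterance, entity_name, value):
    """Build one expectedEntities entry using character offsets."""
    begin = utterance.index(value)  # raises ValueError if the value is absent
    return {
        "entityName": entity_name,
        "beginOffset": begin,             # offset of the value's first character
        "endOffset": begin + len(value),  # offset just past the value's last character
        "originalString": value,
    }

utterance = "I want to order a small pizza"
annotation = entity_annotation(utterance, "PizzaSize", "small")

# Compact JSON, matching the style of the example in the field description.
expected_entities = json.dumps([annotation], separators=(",", ":"))
```

For this utterance, the sketch yields a beginOffset of 18 and an endOffset of 23, which agrees with the PizzaSize example in the field description.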

- Click More, then select Import.

- Browse to, then select the CSV.

- Choose the test suite. The test case can only be assigned to the selected test suite if the testSuite field is empty or matches the name of the selected test suite.

- Click Upload.

Importing Pre-21.04 Versions of the CSV

Test cases from pre-21.04 CSVs, which have the query and TopIntent fields, get added to Default Test Suite only. You can reassign these test cases to other test suites individually by editing them after you import the CSV, or you can update the CSV to the current format and then edit it before you import it, as follows:

- Click More > Import.

- After the import completes, select Default Test Suite, then click More > Export Selected Suite. The exported file will be converted to the current format.

- Extract the ZIP file and edit the CSV. When you've finished, import the CSV again (More > Import).

You may need to delete duplicate test cases from the Default Test Suite.

Note

If you upload the same CSV multiple times with minor changes, any new or updated data will be merged with the old: new updates get applied and new rows are inserted. However, you can't delete any utterances by uploading a new CSV. If you need to delete utterances, then you need to delete them manually from the user interface.

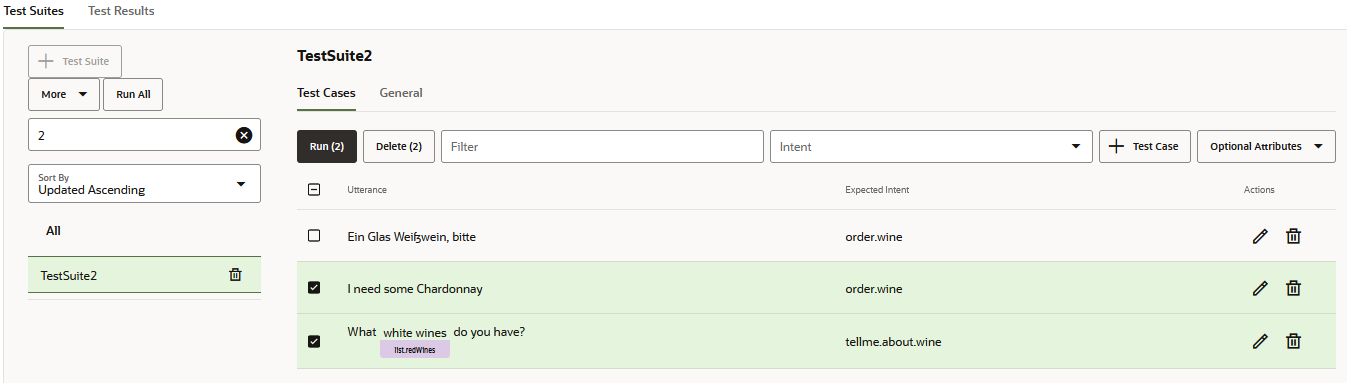

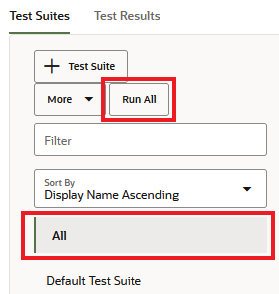

Create Test Runs

Test runs are a compilation of test cases or test suites aimed at evaluating some aspect of the skill's cognition. The contents (and volume) of a test run depend on the capability that you want to test, so a test run might include a subset of test cases from a test suite, a complete test suite, or multiple test suites.

The test cases included in a test run are evaluated against the confidence threshold that's set for the skill. For a test case to pass in the overall test run, it must resolve to the expected intent at, or above, the confidence threshold. If specified, the test case must also satisfy the entity value and language-match criteria. By reviewing the test run results, you can find out if changes made to the platform, or to the skill itself, have compromised the accuracy of the intent resolution.
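The pass criteria for a test case can be summarized as a small Python sketch. It only illustrates the rule stated above and is not the platform's implementation; the CaseResult class, its field names, and the passes function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class CaseResult:
    expected_intent: str
    top_intent: str
    confidence: float
    entities_match: Optional[bool] = None  # None means no entity check was specified
    language_match: Optional[bool] = None  # None means no language check was specified

def passes(result, threshold):
    """A case passes only if every criterion that applies to it is met."""
    intent_ok = (result.top_intent == result.expected_intent
                 and result.confidence >= threshold)
    entities_ok = result.entities_match is not False  # unspecified counts as satisfied
    language_ok = result.language_match is not False
    return intent_ok and entities_ok and language_ok

ok = passes(CaseResult("OrderPizza", "OrderPizza", 0.82), threshold=0.7)
bad_entity = passes(CaseResult("OrderPizza", "OrderPizza", 0.82, entities_match=False), threshold=0.7)
low_confidence = passes(CaseResult("OrderPizza", "OrderPizza", 0.60), threshold=0.7)
```

In this sketch, the second case fails even though the intent resolved correctly, because the entity criterion was specified and not met.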

In addition to testing the model, you can also use the test run results to assess the reliability of your testing. For example, results showing that nearly all of the test cases have passed might, on the surface, indicate optimal functioning of the model. However, a review of the passing test cases may reveal that the test cases do not reflect the current training because their utterances are too simple or have significant overlap in terms of the concepts and verbiage that they're testing for. A high number of failed tests, on the other hand, might indicate deficiencies in the training data, but a review of these test cases might reveal that their utterances are paired with the wrong expected intents.

- Click Run All to create a test run for all of the test cases in a selected test suite. (Or if you want to run all test suites, select All, then click Run All.)

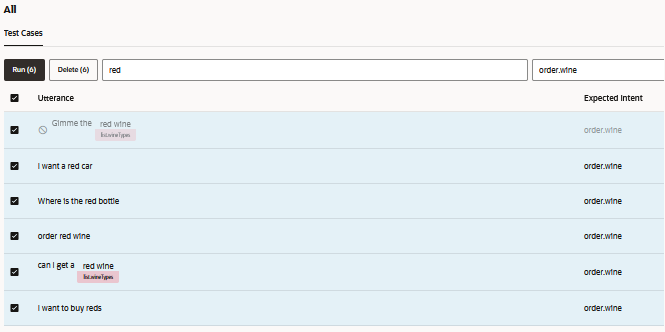

- To create a test run for a selection of test cases within a

suite (or a test run for subset of all test cases if you selected

All), filter the test cases by adding a

string that matches the utterance text and an expected intent. Select

the utterance(s), then click Run.

- To exclude test suite from the test run, first select the test suite,

open the General tab, and then switch off Enable Test

Suite.

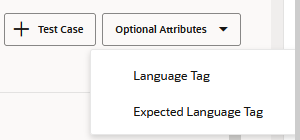

- For multilingual skills, you can also filter by

Language Tag and Expected

Language options (accessed through Optional

Attributes).

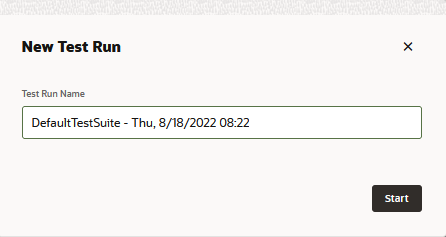

- Optionally, enter a test run name that reflects the subject of the test.

- Click Start.

- Click Test Results, then select the test run.

Tip:

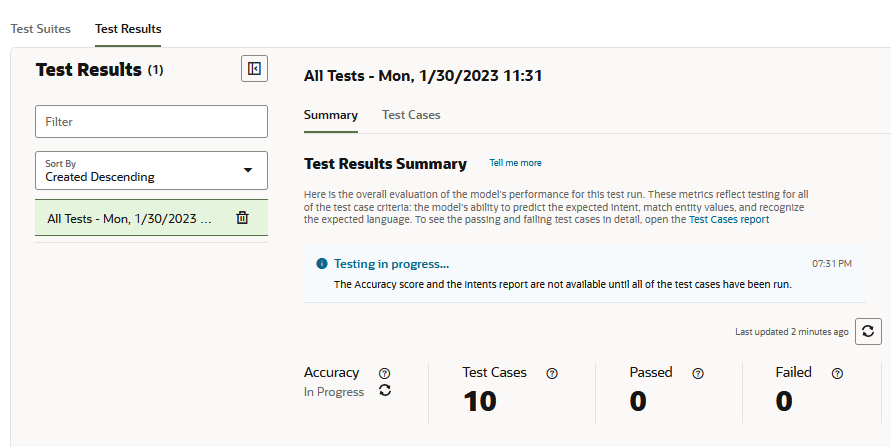

Test runs that contain a large number of test cases may take several minutes to complete. For these large test runs, you may need to click Refresh periodically until the testing completes. A percentage replaces the In Progress status for the Accuracy metric and the Intents report renders after all of the test cases have been evaluated.

- Review the test run reports. For example, first review the high-level metrics for the test run provided by the Overview report. Next, validate the test results against the actual test cases by filtering the Test Cases report, which lists all of the test cases included in the test run, for passed and failed test cases. You can then examine the individual test case results. You might also compare the Accuracy score in the Overview report to the Accuracy score in the Intents report, which measures the model's ability to predict the correct intents. To review the test cases listed in this report, open the Test Cases report and filter by intents.

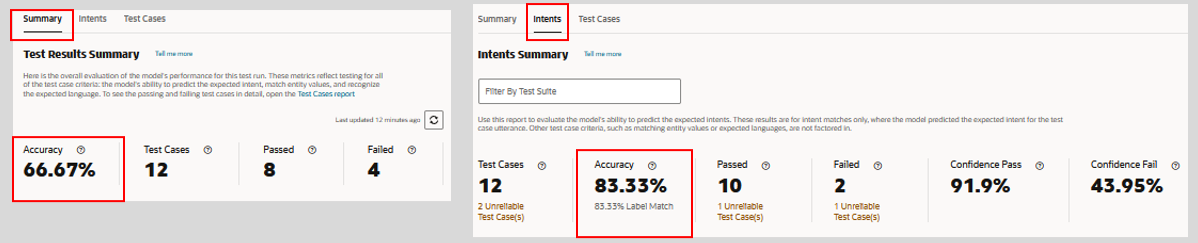

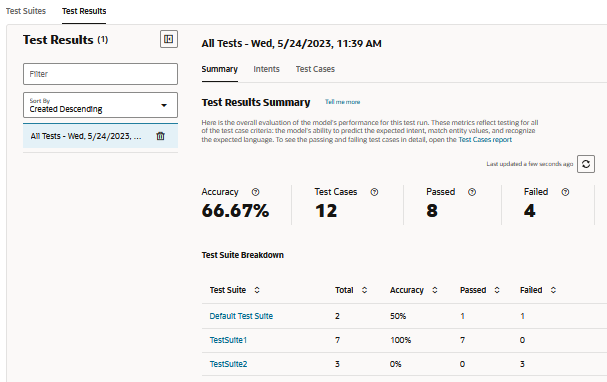

Test Run Summary Report

The Summary report provides you with an overall assessment of how successfully the model can handle the type of user input that's covered in the test run. For the test suites included in the test run, it shows you the total number of test cases that have been used to evaluate the model and, from that total, the number of test cases (both reliable and unreliable) that failed and the number that passed. The model's overall accuracy – its ability to predict expected intents at or above the skill's confidence level, recognize entity values, and resolve utterances in the skill's language – is gauged by the success rate of the passing tests in the test run.

Description of the illustration test-run-test-results-summary.png

Summary Report Metrics

- Accuracy – The model's accuracy in terms of the success rate of the passing test cases (the number of passing test cases compared to the total number of test cases included in the test run).

  Note

  Disabled test cases are not factored into the Accuracy score. Neither are the tests that failed because of errors. Any test that failed is instead added to the Failed count.

  A low Accuracy score might indicate the test run is evaluating the model on concepts and language that are not adequately supported by the training data. To increase the Accuracy score, retrain the model with utterances that reflect the test cases in the test run.

  This Accuracy metric applies to the entire test run and provides a separate score from the Accuracy metric in the Intents report. This metric is the percentage of test cases where the model passed all of the test case criteria. The Accuracy score in the Intents report, on the other hand, is not end-to-end testing. It is the percentage of test cases where the model had only to predict the expected intent at, or above, the skill's confidence threshold. Other test case criteria (such as entity value or skill language) are not factored in. Given the differing criteria of what a passing test case means for these two reports, their respective Accuracy scores may not always be in step. The intent match Accuracy score may be higher than the overall test run score when the testing data is not aligned with the training data. Retraining the model with utterances that support the test cases will enable it to predict the expected intents with higher confidence, which will in turn increase the Accuracy score for the test run.

  Note

  The Accuracy metric is not available until the test run has completed and is not available for test runs that were completed when the skill ran on pre-22.12 versions of the Oracle Digital Assistant platform.

- Test Cases – The total number of test cases (both reliable and unreliable test cases) included in the test run. Skipped test cases are included in this tally, but they are not considered when computing the Accuracy metric.

- Passed – The number of test cases (both reliable and unreliable) that passed by resolving to the expected intent at, or above, the confidence threshold and by matching the selected entity values or language.

- Failed – The number of test cases (both reliable and unreliable) that failed to resolve to the expected intent at the confidence threshold or failed to match the selected entity values or language.

To review the actual test cases behind the Passed and Failed metrics in this report, open the Test Cases report and then apply its Passed or Failed filters.
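The difference between the Accuracy score in this report and the Accuracy score in the Intents report can be illustrated with a short Python sketch. The five sample outcomes are invented for illustration, and the accuracy helper is hypothetical; the sketch only mirrors the two definitions given above.

```python
def accuracy(outcomes):
    """Percentage of True values in a list of pass/fail outcomes."""
    return 100.0 * sum(outcomes) / len(outcomes) if outcomes else 0.0

# Each tuple: (intent matched at or above the threshold, other criteria satisfied).
# Invented sample data, not output from a real test run.
cases = [
    (True, True),
    (True, True),
    (True, True),
    (True, False),  # intent matched, but an entity value or the language did not
    (False, True),  # intent resolved below the confidence threshold
]

# Summary report: a case passes only when all of its criteria are met.
summary_accuracy = accuracy([intent_ok and other_ok for intent_ok, other_ok in cases])

# Intents report: only the intent match at the threshold is considered.
intents_accuracy = accuracy([intent_ok for intent_ok, _ in cases])
```

With this sample data, the Summary score is 60% while the Intents score is 80%, because one case matched its intent but failed another criterion.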

Description of the illustration test-runs-intent-report.png

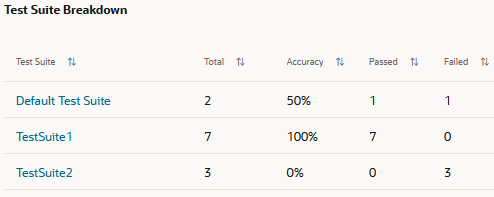

Test Suite Breakdown

The Test Suite Breakdown table lists test suites included in the test run and their individual statistics. You can review the actual test cases belonging to a test suite by clicking the link in the Test Suite column.

Description of the illustration test-suite-breakdown.png

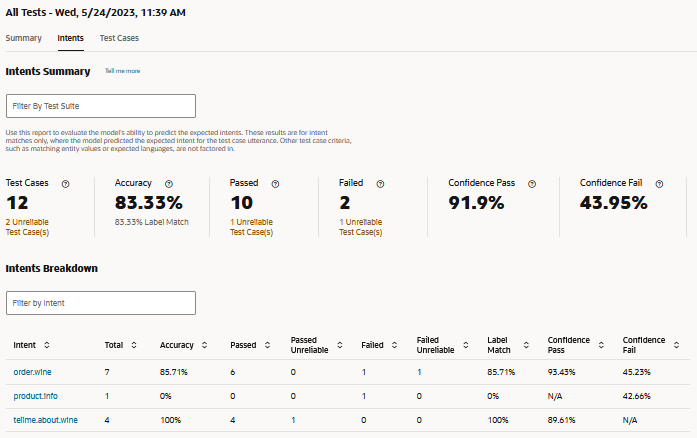

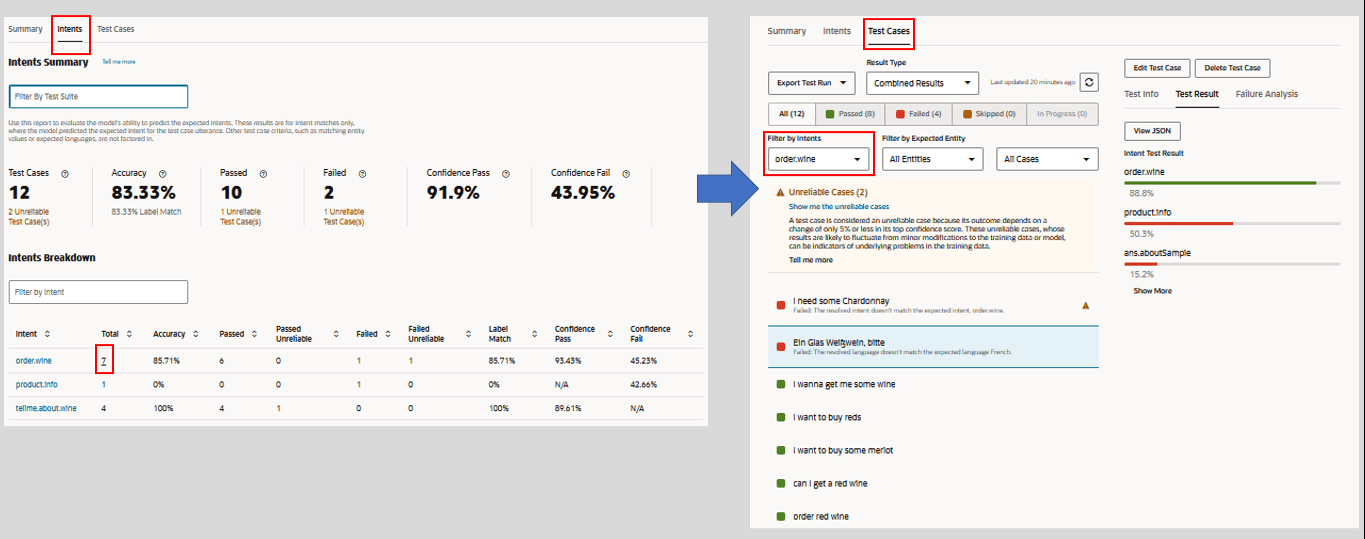

Intents Report

The metrics in this report track the model's label matches throughout the test run's test cases. A label match is where the model correctly predicts the expected intent for the test case utterance. Within the context of this report, accuracy, passing, and failing are measured in terms of the test cases where the model predicted the correct expected intent at, or above, the confidence threshold. Other criteria considered in the Summary report, such as entity value matches or skill language, are not considered. As a result, this report provides you with a different view of model accuracy, one that helps you to verify if the current training enables the model to consistently predict the correct intents.

This report is not available for test runs that were completed when the skill ran on a pre-22.12 version of the Oracle Digital Assistant platform.

Intents Report Metrics

- Test Cases – The number of test cases included in this test run. This total includes both reliable and unreliable test cases. Skipped test cases are not included in this tally.

  Tip:

  The unreliable test case links for the Test Cases, Passed and Failed metrics open the Test Cases report filtered by unreliable test cases. This navigation is not available when you filter the report by test suite.

- Accuracy – The model's accuracy in matching the expected intent at, or above, the skill's confidence threshold across the test cases in this test run. The Label Match submetric represents the percentage of test cases in the test run where the model correctly predicted the expected intent, regardless of the confidence score. Because Label Match factors in failing test cases along with passing test cases, its score may be higher than the Accuracy score.

You can compare this Accuracy metric with the Accuracy metric from the Summary report. When the Accuracy score in the Summary report is low, you can use this report to quickly find out if the model's failings can be attributed to its inability to predict the expected intent. When the Accuracy score in this report is high, however, you can rule out label matching as the root of the problem and, rather than having to heavily revise the training data to increase the test run's Accuracy score, you can instead focus on adding utterances that reflect the concepts and language in the test case utterances.

- Passed – The number of test cases (reliable and unreliable) where the model predicted the expected intent at the skill's confidence threshold.

- Failed – The number of test cases (reliable and unreliable) where the model predicted the expected intent below the skill's confidence threshold.

- Confidence Pass – An average of the confidence scores for all of the test cases that passed in this test run.

- Confidence Fail – An average of the confidence scores for all of the test cases that failed in this test run.

When you filter the Intents report by test suite, access to the Test Cases report from the unreliable test case links in the Test Cases, Passed, and Failed tiles is not available. These links become active again when you remove all entries from the Filter by Test Suite field.

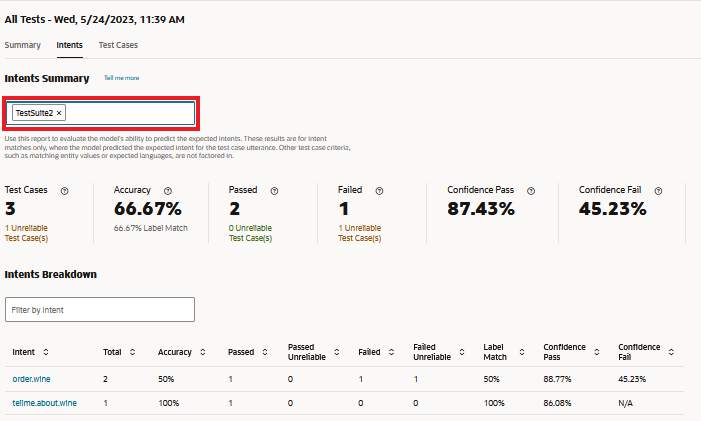

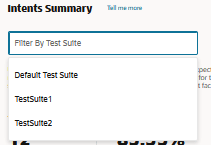

Filter by Test Suite

The report adjusts the metrics for each test suite that you add (or subsequently remove). It tabulates the intent matching results in terms of the number of enabled test cases that belong to the selected test suite.

You can't filter by test suites that were run on a platform prior to Version 23.06. To include these test suites, you need to run them again after you upgrade to Version 23.06 or higher.

Filtering by test suite disables navigation to the Test Cases report from the unreliable test cases links in the Test Cases, Passed, and Failed tiles. The links in the Total column of the Intents Breakdown are also disabled. All of these links become active again after you remove all of the entries from the Filter by Test Suite field.

Intents Breakdown

The Filter by Intent field changes the view of the Intents Breakdown table but does not change the report's overall metrics. These metrics reflect the entries (or lack of entries) in the Filter by Test Suite field.

- Intent – The name of the expected intent.

- Total – The number of test cases, represented as a link, for the expected intent. You can traverse to the Test Cases report by clicking this link.

  Note

  You can't navigate to the Test Cases report when you've applied a test suite filter to this report. This link becomes active again when you remove all entries from the Filter by Test Suite field.

- Accuracy – The percentage of test cases that resulted in label matches for the expected intent at, or above, the skill's confidence threshold.

- Passed – The number of test cases (including unreliable test cases) where the model predicted the expected intent at, or above, the skill's confidence threshold.

- Passed - Unreliable – The number of test cases where the model predicted the expected intent at 5% or less above the skill's confidence threshold.

- Failed – The number of test cases in the test run that failed because the model predicted the expected intent below the skill's confidence threshold.

- Failed - Unreliable – The number of test cases that failed because the model's confidence in predicting the expected intent fell within 5% below the skill's confidence threshold. These test cases can factor into the

- Label Match – The number of test cases where the model successfully predicted the expected intent, regardless of confidence level. Because it factors in failed test cases, the Label Match and Accuracy scores may not always be in step with one another. For example, four passing test cases out of five results in an 80% Accuracy score for the intent. However, if the model predicted the intent correctly for the one failing test case, then Label Match would outscore Accuracy by 20%.

- Confidence Pass – An average of the confidence scores for all of the test cases that successfully matched the expected intent.

- Confidence Fail – An average of the confidence scores for all of the test cases that failed to match the expected intent.

Tip:

To review the actual test cases, open the Test Cases report and then filter by the intent.
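The Label Match example from the Intents Breakdown (four passing test cases out of five, with the failing case still predicting the right intent) can be worked through in Python. The data, the threshold of 0.7, and the intent_scores helper are assumptions made for illustration only.

```python
def intent_scores(cases, threshold):
    """Return (accuracy, label_match) as percentages for one expected intent."""
    total = len(cases)
    # Label Match: the right intent was predicted, whatever the confidence.
    label_matches = sum(1 for c in cases if c["predicted"] == c["expected"])
    # Accuracy: the right intent was predicted at or above the threshold.
    passed = sum(
        1 for c in cases
        if c["predicted"] == c["expected"] and c["confidence"] >= threshold
    )
    return 100.0 * passed / total, 100.0 * label_matches / total

# Invented sample: five test cases for a Transactions intent.
cases = [
    {"expected": "Transactions", "predicted": "Transactions", "confidence": 0.91},
    {"expected": "Transactions", "predicted": "Transactions", "confidence": 0.88},
    {"expected": "Transactions", "predicted": "Transactions", "confidence": 0.79},
    {"expected": "Transactions", "predicted": "Transactions", "confidence": 0.73},
    {"expected": "Transactions", "predicted": "Transactions", "confidence": 0.55},  # below threshold
]
acc, label_match = intent_scores(cases, threshold=0.7)
```

Here Accuracy comes to 80% and Label Match to 100%, a gap of 20 percentage points, because the fifth case predicted the right intent with too little confidence.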

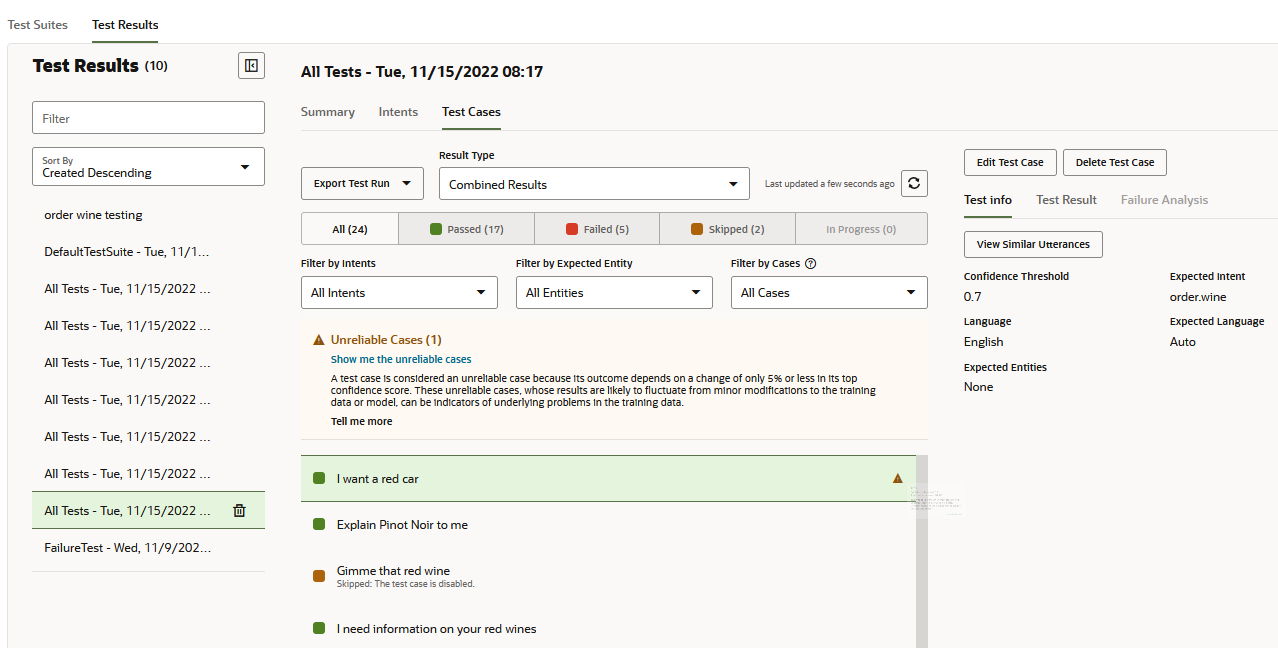

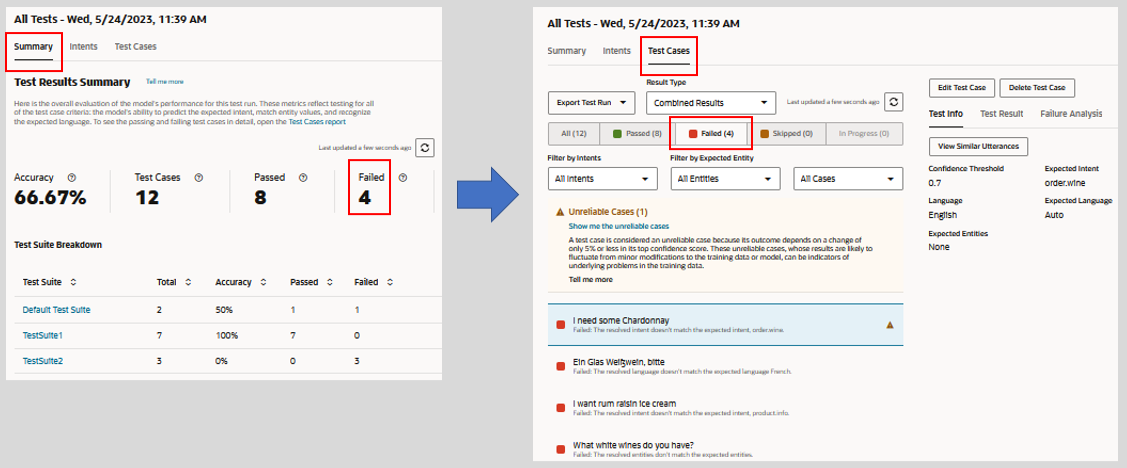

Test Cases Report

- You can filter the results by clicking All, Passed (green), or Failed (red). The test cases counted as skipped include both disabled test cases and test cases where the expected intent has been disabled.

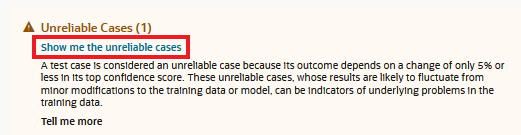

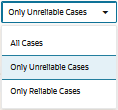

You can filter the results by unreliable test cases by either clicking Show me unreliable cases in the warning message, or by selecting the Only Unreliable Cases filter.

- If needed, filter the results for a specific intent or entity or by reliable or unreliable test cases.

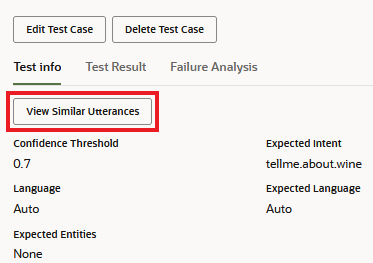

- For unreliable and failed test cases, click View Similar Utterances (located in the Test Info page) to find out if the test case utterance has any similarity to the utterances in the training set.

- Check the following results:

- Test Info – Presents the test case overview, including the target confidence threshold, the expected intent, and the matched entity values.

- Test Result – The ranking of intent by confidence level. When present, the report also identifies the entities contained in the utterance by entity name and value. You can also view the JSON object containing the full results.

- Failure Analysis – Explains why the test case failed. For example, the actual intent is not the expected intent, the labeled entity value in the test case doesn't match the resolved entity, or the expected language is not the same as the detected language.

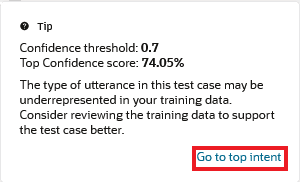

Unreliable Test Cases

Some test cases cannot provide consistent results because they resolve within 5% or less of the Confidence Threshold. This narrow margin makes these test cases unreliable. When the skill's Confidence Threshold is set at 0.7, for example, a test case that's passing at 74% may fail after you've made only minor modifications to your training data or because the skill has been upgraded to a new version of the model. The fragility of these test cases may indicate that the utterances that they represent in the training data may be too few in number and that you may need to balance the intent's training data with similar utterances.
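As a rough illustration of this margin, the following Python sketch flags a confidence score as unreliable when it lies within 0.05 of the threshold, whether it passes or fails. Treating "5%" as an absolute margin of 0.05 on the confidence scale is an assumption based on the 0.7 and 74% example above, and the is_unreliable helper is hypothetical.

```python
def is_unreliable(confidence, threshold, margin=0.05):
    """True when the score sits within the margin on either side of the threshold."""
    # A tiny tolerance avoids floating-point surprises at the edge of the margin.
    return abs(confidence - threshold) <= margin + 1e-9

threshold = 0.7
passing_but_fragile = is_unreliable(0.74, threshold)   # passes, but only just
failing_but_close = is_unreliable(0.66, threshold)     # fails, but only just
comfortably_passing = is_unreliable(0.90, threshold)
```

A check like this could be applied to an exported test run to list the cases that are most likely to flip after retraining.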

- Run the test suite. Then click Test Results and select the test run. The unreliable test cases are sorted at the beginning of the test run results and are flagged with warnings.

- To isolate the unreliable test cases:

  - Click Show me the unreliable cases in the message.

  - Select Only Unreliable Cases from the Filter by Cases menu.

- To find the proximity of the test case's top-ranking intent to the Confidence Threshold, open the Test Result window. For a comparison of the top-ranking confidence score to the Confidence Threshold, click the accompanying icon.

- If you need to supplement the training data for the top-ranking intent, click Go to top intent in the warning message.

- If you want to determine the quantity of utterances that are represented by the test case in the training data, click View Similar Utterances.

You can also check if any of the utterances most similar to the test case utterance are also anomalies in the training set by running the Anomalies Report.

Exported Test Runs

Test runs are not persisted with the skill, but you can download them to your system for analysis by clicking Export Test Run. If the intents no longer resolve the user input as expected, or if platform changes have negatively impacted intent resolution, you can gather the details for an SR (service request) using the logs of exported test runs.

Failure Testing

Failure (or negative) testing enables you to bulk test utterances that should never be resolved, either because they result in unresolvedIntent, or because they only resolve to other intents below the confidence threshold for all of the intents.

- Specify unresolvedIntent as the Expected Intent for all of the test cases that you expect to be unresolved. Ideally, these "false" phrases will remain unresolved.

- If needed, adjust the confidence threshold when creating a test run to confirm that the false phrases (the ones with unresolvedIntent as their expected intent) can only resolve below the value that you set here. For example, increasing the threshold might result in the false phrases failing to resolve at the confidence level to any intent (including unresolvedIntent), which means they pass because they're considered unresolved.

- Review the test results, checking that the test cases passed by matching unresolvedIntent at the threshold, or failed to match any intent (unresolvedIntent or otherwise) at the threshold.
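The pass condition for a failure test case, as described in the steps above, can be sketched in Python. The failure_test_passes function and the shape of the ranking list are hypothetical; the sketch only restates the rule that a false phrase passes when it matches unresolvedIntent or when no intent reaches the threshold.

```python
def failure_test_passes(ranking, threshold):
    """ranking: list of (intent_name, confidence) pairs, highest confidence first."""
    if not ranking:
        return True  # nothing was predicted, so the phrase is unresolved
    top_intent, top_confidence = ranking[0]
    if top_confidence < threshold:
        return True  # no intent resolves at the threshold
    return top_intent == "unresolvedIntent"

# Invented rankings for three false phrases.
matched_unresolved = failure_test_passes([("unresolvedIntent", 0.85), ("OrderPizza", 0.10)], 0.7)
nothing_at_threshold = failure_test_passes([("OrderPizza", 0.45), ("unresolvedIntent", 0.40)], 0.7)
wrongly_resolved = failure_test_passes([("OrderPizza", 0.92), ("unresolvedIntent", 0.05)], 0.7)
```

The third phrase fails the test because it resolved to a real intent at the threshold, which is exactly the situation that failure testing is meant to catch.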

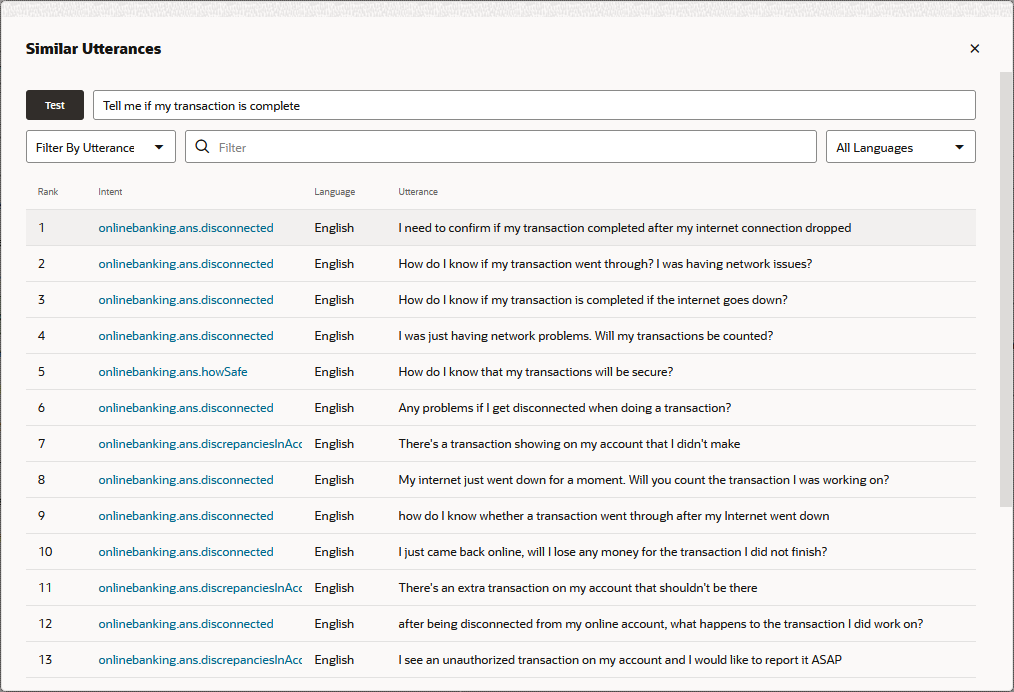

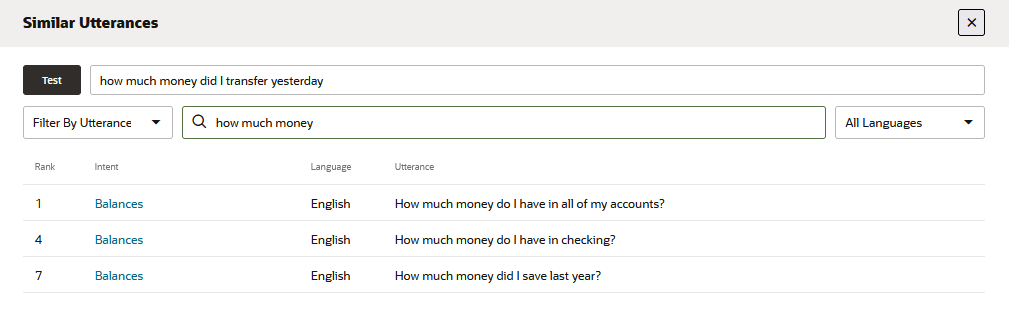

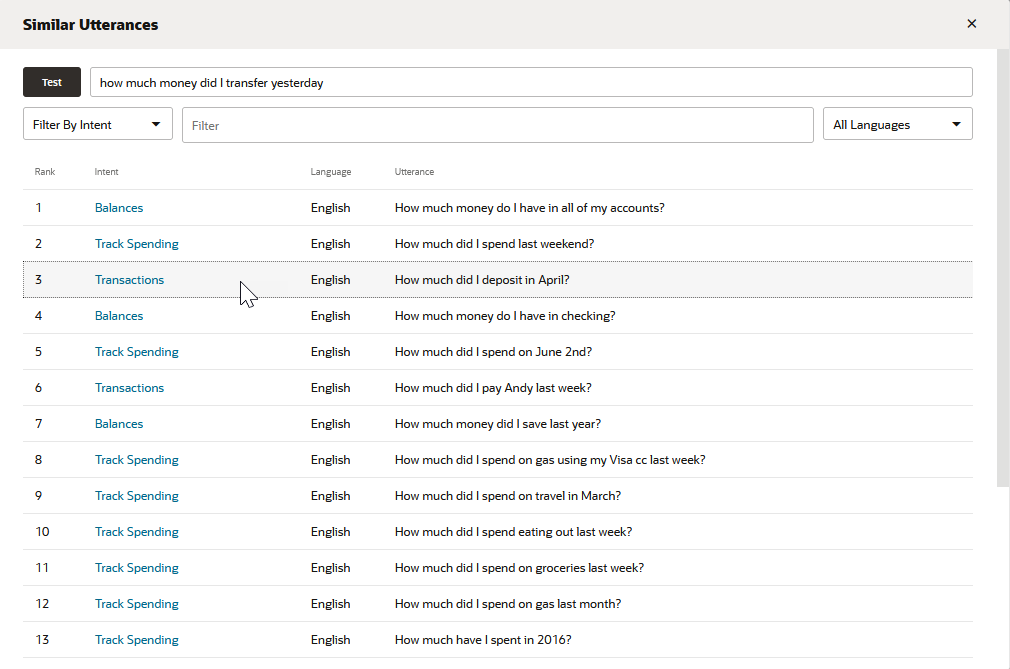

Similar Utterances

You can find out how similar your test phrase is to the utterances in the training corpus by clicking View Similar Utterances. This tool provides you with an added perspective on the skill's training data by showing you how similar its utterances are to the test phrase, and by extension, how similar the utterances are to one another across intents. Using this tool, you can find out if the similarity of the test phrase to utterances belonging to other intents is the reason why the test phrase is not resolving as expected. It might even point out where training data belongs to the wrong intent because of its similarity to the test phrase.

Description of the illustration similar-utterance-report-all-intents.png

The list generated by this tool ranks 20 utterances (along with their associated intents) that are closest to the test phrase. Ideally, the top-ranking utterance on this list – the one most like the test phrase – belongs to the intent that's targeted for the test phrase. If the closest utterance that belongs to the expected intent is further down, then a review of the list might provide a few hints as to why. For example, if you're testing a Transactions intent utterance, how much money did I transfer yesterday?, you'd expect the top-ranking utterance to likewise belong to a Transactions intent. However, if this test utterance is resolving to the wrong intent, or resolving below the confidence level, the list might reveal that it has more in common with highly ranked utterances with similar wording that belong to other intents. The Balances intent's How much money do I have in all of my accounts?, for example, might be closer to the test utterance than the Transactions intent's lower-ranked How much did I deposit in April? utterance.

You can only use this tool for skills trained on Trainer Tm (it's not available for skills trained with Ht).

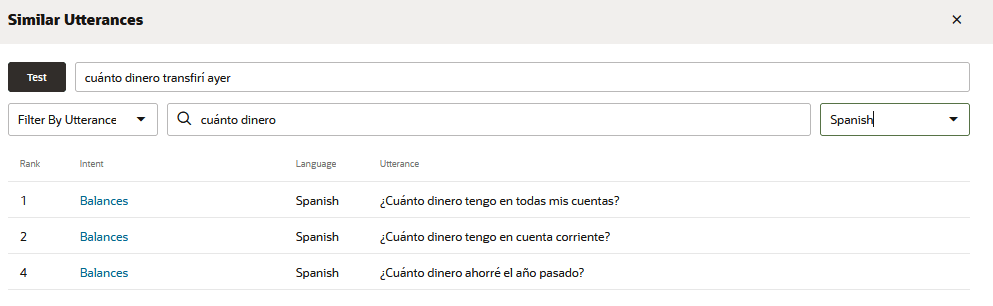

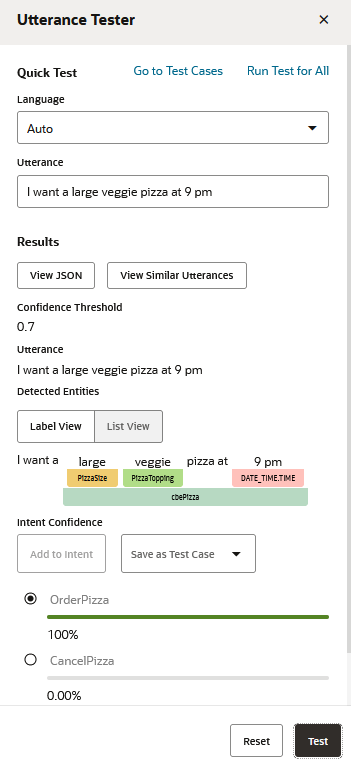

- Filter by Intent – Returns 20 utterances that are closest to the test utterance that belong to the selected intent (or intents).

- Filter by Utterance – Returns 20 of the utterances closest to the test utterance that contain a word or phrase.

- Language – For multi-lingual skills, you can query and filter the report by selecting a language.

Applying these filters does not change the rankings, just the view. An utterance ranked third, for example, will be noted as such regardless of the filter. The report's rankings and contents change only when you've updated the corpus and retrained the skill with Trainer Tm.